AI Fraud Detection: How Community Banks Can Afford What the Big Banks Built

Enterprise-grade AI fraud tools are now accessible to community banks under $1B in assets. Here's what's working, what it costs, and where to start in 2026.

Fraud losses across financial services hit $12.5 billion in 2024, up 25% year over year. Community banks absorbed a disproportionate share of that pain — not because they’re targeted more often in absolute terms, but because every dollar lost hits harder when your margins are thinner and your fraud team is a person, not a department.

Here’s what changed: the AI-powered fraud detection tools that JPMorgan spent billions building are now available as managed services priced for institutions with $500 million in assets. The technology gap between a $3 trillion bank and a $500 million bank has never been smaller. The question isn’t whether community banks can afford AI fraud detection. It’s whether they can afford to keep running rules-based systems while fraudsters use generative AI to scale attacks.

The Threat Has Changed. Your Defense Hasn’t.

More than 50% of fraud attempts now involve artificial intelligence in some form. That’s not a projection — it’s a 2025 reality documented across multiple industry reports.

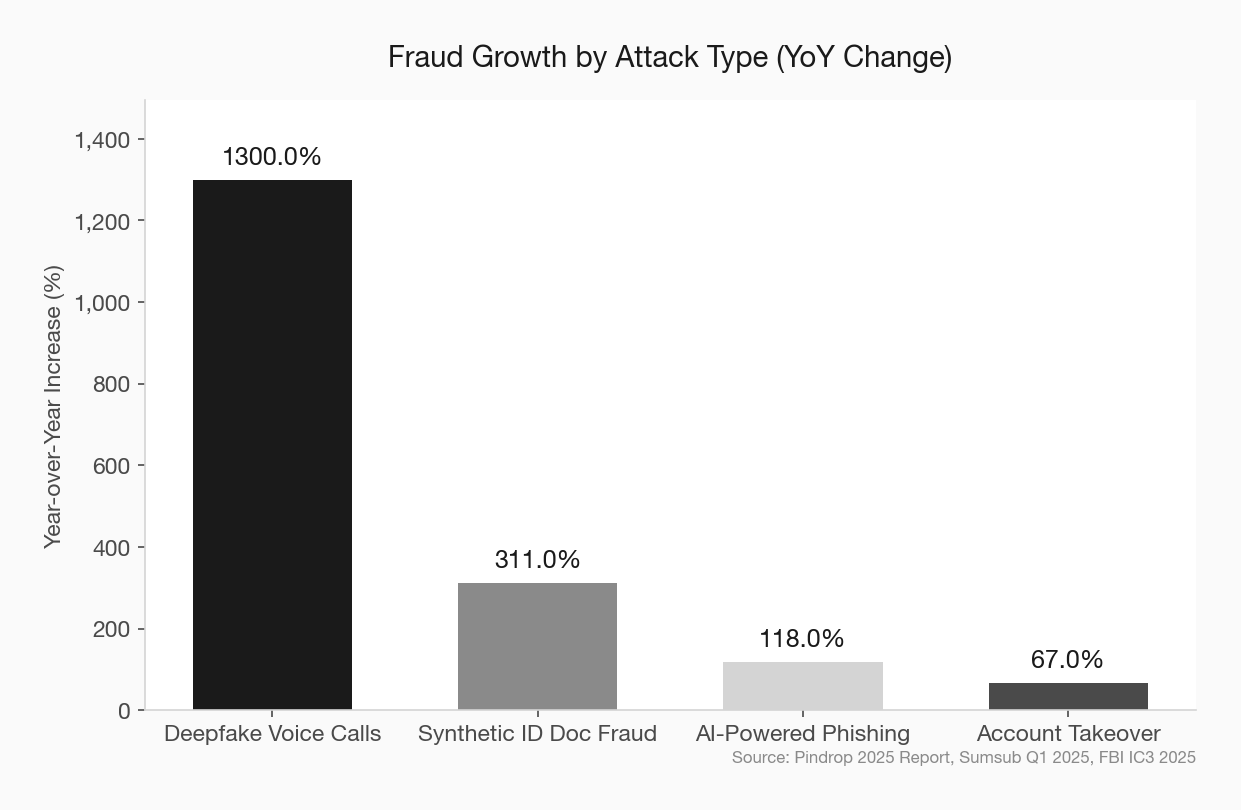

The fraud hitting community banks today doesn’t look like the fraud from three years ago. Deepfake voice calls rose 1,300% year over year according to Pindrop’s 2025 data. Synthetic identity document fraud increased 311% between Q1 2024 and Q1 2025. AI-generated phishing messages are nearly indistinguishable from legitimate communications.

Community banks are not too small to be targeted. They’re targeted because they’re small. As fraud strategist Frank McKenna put it: “Fraudsters are targeting credit unions and smaller community banks because they know that they have not invested in the sophisticated technology that the bigger banks have.”

The 2025 CSBS Annual Survey confirms that community bankers know this. Cyberattacks and bank fraud ranked as the top two concerns among surveyed community bankers, ahead of labor costs, the federal deficit, and inflation. And 66% of community bankers identified fraud detection as the single most impactful use case for AI, a higher share than in any other banking segment.

The awareness is there. The action is lagging.

What the Big Banks Built — And Why It Matters to You

JPMorgan Chase’s AI fraud detection infrastructure has generated nearly $1.5 billion in cost savings. Wells Fargo deployed deep learning algorithms that compare every transaction in real time against a massive database of known fraudulent patterns. HSBC built machine learning models that analyze transactions in real time to separate legitimate activity from money laundering.

These systems share common traits: they ingest enormous volumes of transaction data, learn continuously from new patterns, reduce false positives dramatically, and operate in real time. The result is fraud caught faster, fewer legitimate transactions flagged, and lower operational cost per investigation.

Community banks couldn’t touch this technology five years ago. The infrastructure cost alone was prohibitive. You needed data scientists, dedicated servers, and a seven-figure annual budget just to get started.

That barrier collapsed.

The Democratization of AI Fraud Detection

Three structural shifts made enterprise-grade fraud AI accessible to community banks:

Cloud-native delivery. AI fraud platforms now run entirely in the cloud. No on-premise hardware. No dedicated IT staff to maintain models. You’re paying for a service, not building an infrastructure. Vendors like Feedzai, Sardine, and Alloy deliver their platforms as managed services that scale with your transaction volume.

Embedded AI from core providers. CSI, Jack Henry, and FIS have embedded AI fraud detection capabilities directly into their core banking platforms. For the 60%+ of community banks running on one of these cores, AI fraud detection may already be available — or one upgrade cycle away. This is the lowest-friction path for most community banks.

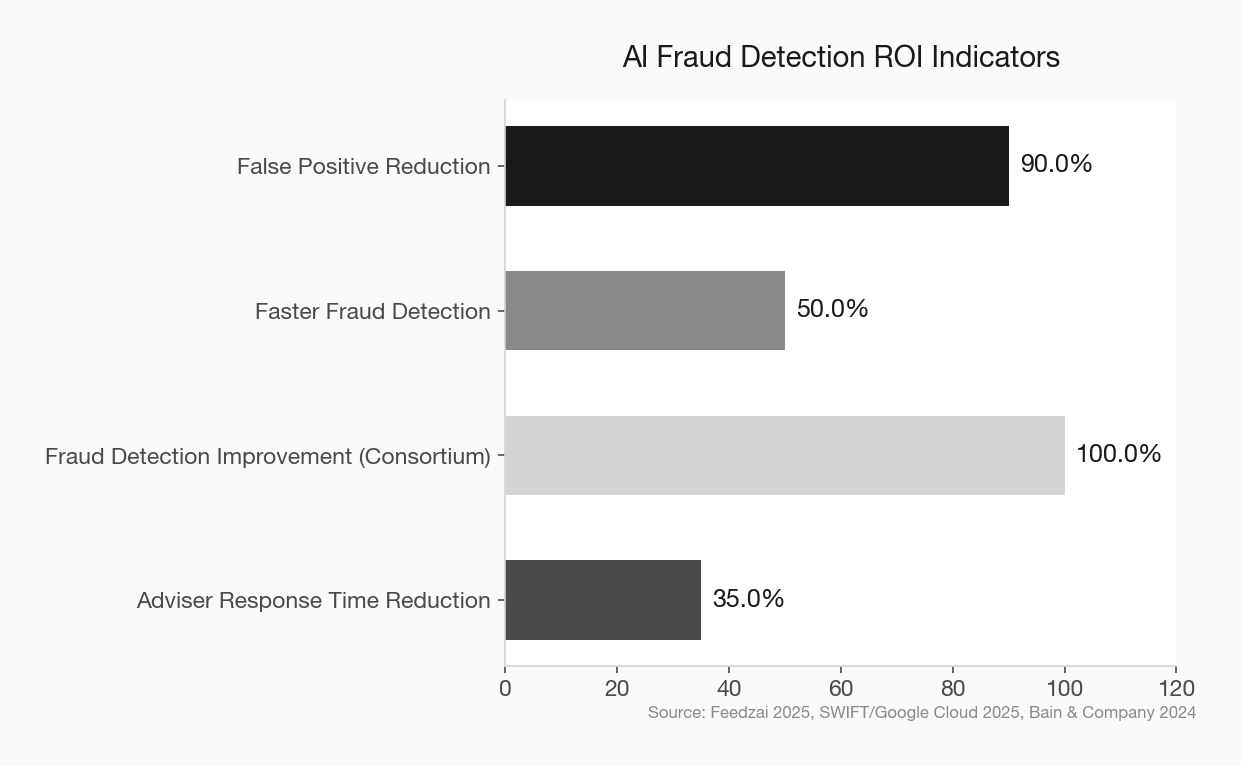

Consortium models and federated learning. This is the most consequential shift. SWIFT piloted a federated learning approach with Google Cloud and 13 global financial institutions in 2025. Each bank trains a shared AI model on its own local data. Only the model improvements — not the underlying data — get shared. The result: a fraud detection model trained on synthetic data from ten million transactions was twice as effective at identifying known fraud patterns compared to a model trained on a single institution’s data.

The implication for community banks is profound. You don’t need JPMorgan’s transaction volume to benefit from JPMorgan-scale pattern recognition. Consortium-based models let smaller institutions pool their collective intelligence without exposing customer data. DataVisor already offers this for community and regional banks through its shared fraud intelligence network.
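For readers who want to see the mechanics, here is a minimal Python sketch of one federated round, assuming a toy linear scoring model and plain weighted averaging of weights (often called federated averaging). The source does not describe how SWIFT's pilot was implemented, so the function names, the learning rate, and the model itself are illustrative assumptions, not the pilot's method.

```python
from typing import List, Sequence, Tuple

# Hypothetical record shape: (features, label), where 1.0 = fraud, 0.0 = legitimate.
Example = Tuple[Sequence[float], float]


def local_update(weights: List[float], data: List[Example], lr: float = 0.1) -> List[float]:
    """Train on one bank's own data; the raw records never leave this function."""
    w = list(weights)
    for features, label in data:
        pred = sum(wi * xi for wi, xi in zip(w, features))
        err = pred - label
        # Gradient step for squared error on a linear model.
        w = [wi - lr * err * xi for wi, xi in zip(w, features)]
    return w


def federated_average(updates: List[List[float]], sizes: List[int]) -> List[float]:
    """Combine per-bank weights, weighting each bank by its number of examples."""
    total = sum(sizes)
    dim = len(updates[0])
    return [sum(u[i] * n for u, n in zip(updates, sizes)) / total for i in range(dim)]


def run_round(global_weights: List[float], banks: List[List[Example]]) -> List[float]:
    """One round: every bank trains locally, then only the weights are pooled."""
    updates = [local_update(global_weights, data) for data in banks]
    sizes = [len(data) for data in banks]
    return federated_average(updates, sizes)


# Made-up toy data: two banks, two features per transaction.
bank_a = [((1.0, 0.0), 1.0), ((0.0, 1.0), 0.0)]
bank_b = [((1.0, 1.0), 1.0)]
shared = run_round([0.0, 0.0], [bank_a, bank_b])
print(shared)
```

The point to notice is what crosses the boundary: `run_round` only ever sees weight vectors and example counts, never a transaction. Real deployments typically add protections on top of this, such as secure aggregation, which this sketch leaves out.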

What’s Actually Working Right Now

Forget the vendor pitches. Here’s what’s producing measurable results at institutions that look like yours.

Real-Time Transaction Monitoring

AI models that score every transaction in real time — debit, credit, ACH, wire, Zelle — and flag anomalies before they clear. Financial institutions using AI anomaly detection report up to 90% reduction in false positives and 50% faster fraud detection. For a community bank running a three-person operations team, cutting false positives by even half means your people are investigating real threats instead of chasing legitimate transactions.
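To make "score every transaction and flag anomalies" concrete, here is a deliberately simple Python sketch. It measures how far a new amount sits from an account's own history, in standard deviations. Commercial platforms use trained models over many signals; the single feature, the function names, and the threshold of 3.0 are assumptions for illustration only.

```python
from statistics import mean, pstdev
from typing import List


def anomaly_score(history: List[float], amount: float) -> float:
    """Distance of `amount` from the account's usual spend, in standard deviations."""
    if len(history) < 2:
        return 0.0  # Too little history to judge either way.
    typical = mean(history)
    spread = pstdev(history)
    if spread == 0:
        # Every past amount was identical: any different amount is maximally unusual.
        return 0.0 if amount == typical else float("inf")
    return abs(amount - typical) / spread


def should_flag(history: List[float], amount: float, threshold: float = 3.0) -> bool:
    """Hold the transaction for review when the score reaches the threshold."""
    return anomaly_score(history, amount) >= threshold


# Made-up account history of debit card purchases, in dollars.
past_purchases = [40.0, 50.0, 60.0]
print(should_flag(past_purchases, 55.0))
print(should_flag(past_purchases, 500.0))
```

The threshold is where the false-positive tradeoff lives: raise it and your team reviews fewer legitimate transactions but more fraud slips through, lower it and the reverse happens.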

Deepfake and Voice Clone Detection

Michigan State University Federal Credit Union partnered with Pindrop to deploy voice authentication and deepfake detection in its call center. Between August 2024 and September 2025, the 367,000-member credit union caught $2.57 million in fraud exposure from deepfake calls alone — roughly $200,000 per month in avoided losses. MSUFCU isn’t a megabank. It’s a mid-size credit union with a focused investment in the right tool for a specific, escalating threat.

Document Fraud Detection

Inscribe’s data shows that 1 in 16 documents submitted to financial institutions now shows signs of fraud, with AI-generated and template-based document fraud increasing 5x between April and December 2025. AI document verification tools catch manipulated pay stubs, fabricated tax returns, and synthetic bank statements that human reviewers consistently miss, especially under volume pressure during lending cycles.

Behavioral Biometrics

Sardine and similar platforms analyze how a user interacts with their device — typing speed, mouse movement, session behavior — to detect account takeover attempts in real time. This layer catches fraud that transaction monitoring alone misses, particularly in digital banking channels where community banks are most vulnerable.
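As a rough illustration of the idea, the Python sketch below compares one session's behavior against a stored profile of how the account holder usually types and moves the mouse. The feature names, the profile format, and the limit of 3.0 are hypothetical; this is not how Sardine or any specific vendor implements it.

```python
from typing import Dict, Tuple

# Hypothetical profile: feature name -> (typical value, typical spread) for one user.
Profile = Dict[str, Tuple[float, float]]


def session_deviation(profile: Profile, session: Dict[str, float]) -> float:
    """Average number of 'spreads' the session sits from the user's usual behavior."""
    deviations = []
    for name, (typical, spread) in profile.items():
        if name in session and spread > 0:
            deviations.append(abs(session[name] - typical) / spread)
    # No comparable features means no evidence either way.
    return sum(deviations) / len(deviations) if deviations else 0.0


def needs_step_up(profile: Profile, session: Dict[str, float], limit: float = 3.0) -> bool:
    """True when the session is unusual enough to ask for extra verification."""
    return session_deviation(profile, session) > limit


# Made-up numbers: the usual user types slowly; tonight's session is much faster.
usual = {"key_interval_ms": (200.0, 20.0), "mouse_px_per_s": (400.0, 50.0)}
tonight = {"key_interval_ms": 60.0, "mouse_px_per_s": 900.0}
print(needs_step_up(usual, tonight))
```

Note that the check never looks at the transaction itself, which is why this layer can catch an account takeover even when the payment amount looks ordinary.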

What It Actually Costs

Let’s talk numbers. The range depends on your approach:

Embedded core provider AI: If your core already offers AI fraud capabilities (CSI, Jack Henry, FIS), the incremental cost may be bundled into your existing contract or available as a modest add-on. This is the cheapest path, and for many community banks, the right starting point.

Managed service platforms (Feedzai, Alloy, Sardine, DataVisor): These typically price on transaction volume or asset size. For a community bank under $1 billion, expect annual costs in the low-to-mid six figures — not the seven-figure commitments these platforms required five years ago. Some vendors offer tiered pricing specifically designed for community and regional banks.

Point solutions (Pindrop for voice, Inscribe for documents): These address specific fraud vectors at lower cost than full-platform deployments. MSUFCU’s Pindrop implementation paid for itself within months based on avoided losses alone.

Full platform overhaul: A comprehensive AI fraud detection buildout runs $2M–$10M at larger institutions. Community banks don’t need this. The managed service model exists precisely so you don’t have to build what JPMorgan built.

The real cost calculation isn’t what you spend on AI. It’s what you’re losing without it. When 67% of financial institutions reported rising fraud rates in 2025, and 41% identified real-time payment fraud as their biggest exposure for 2026, the cost of inaction has a number attached to it. You just haven’t calculated it yet.
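One way to put a number on the cost of inaction is a back-of-the-envelope comparison like the Python sketch below. Every input is a placeholder to replace with your own figures. The reduction rate, hours saved, and tool cost shown are hypothetical, not vendor quotes or benchmarks from the reports cited above.

```python
def net_annual_benefit(
    annual_fraud_loss: float,
    expected_loss_reduction: float,
    analyst_hours_saved: float,
    hourly_cost: float,
    annual_tool_cost: float,
) -> float:
    """Avoided losses plus saved labor, minus what the tool costs per year."""
    if not 0.0 <= expected_loss_reduction <= 1.0:
        raise ValueError("expected_loss_reduction must be a fraction between 0 and 1")
    avoided_losses = annual_fraud_loss * expected_loss_reduction
    labor_savings = analyst_hours_saved * hourly_cost
    return avoided_losses + labor_savings - annual_tool_cost


# Placeholder inputs only; substitute your own loss history and vendor quote.
result = net_annual_benefit(
    annual_fraud_loss=800_000,
    expected_loss_reduction=0.40,
    analyst_hours_saved=1_000,
    hourly_cost=45.0,
    annual_tool_cost=250_000,
)
print(f"Net annual benefit: ${result:,.0f}")
```

A negative result does not automatically mean "don't buy". It means the assumptions, especially the expected loss reduction, deserve a harder look before you sign anything.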

Where to Start: A Three-Step Framework

Community banks don’t need a transformation. They need a starting point.

Step 1: Audit your current fraud stack. What’s rules-based? What’s manual? Where are your false positive rates highest? Where did you take the most losses last year? The 2025 CSBS data says 59% of community bank fraud cases are credit and debit card fraud, and 39% of dollar losses come from that category. Check fraud accounts for 30% of losses. Start where the bleeding is worst.

Step 2: Ask your core provider what’s available. Before you evaluate third-party vendors, find out what your existing core offers. CSI’s 2026 survey shows fraud detection is a priority use case for their platform. If your core has AI capabilities you’re not using, that’s the fastest win. If your core’s answer is “it’s on the roadmap,” that tells you something too.

Step 3: Pick one use case and deploy. Don’t try to boil the ocean. If deepfake calls are hitting your call center, deploy voice authentication. If document fraud is spiking in your lending pipeline, add document verification AI. If your false positive rate is burning operations time, upgrade your transaction monitoring. One well-chosen AI tool, deployed in 90 days, will teach you more than a year of strategic planning.
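The audit in Step 1 can start as a simple ranking of last year's losses by category, which then points to the use case to pick in Step 3. This Python sketch assumes you can export loss totals per category; the category names and dollar amounts are made up for illustration.

```python
from typing import Dict, List, Tuple


def rank_by_loss(losses: Dict[str, float]) -> List[Tuple[str, float]]:
    """Return (category, share_of_total_loss) pairs, largest share first."""
    total = sum(losses.values())
    if total == 0:
        return []  # Nothing lost, nothing to rank.
    shares = [(name, amount / total) for name, amount in losses.items()]
    return sorted(shares, key=lambda pair: pair[1], reverse=True)


# Hypothetical export of last year's fraud losses, in dollars.
last_year = {"card": 390_000.0, "check": 300_000.0, "wire": 180_000.0, "other": 130_000.0}
for category, share in rank_by_loss(last_year):
    print(f"{category}: {share:.0%} of losses")
```

The same ranking run on false positive counts instead of dollars shows where your operations time is going, which may point to a different first project than the loss ranking does.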

The Regulatory Tailwind

Community bankers often cite compliance uncertainty as a reason to delay AI adoption. The regulatory picture is actually clearer than it’s been in years.

The NCUA hired three dedicated AI officers for 2025-2026 and published a comprehensive AI compliance framework. The OCC and FDIC have signaled support for responsible AI adoption, particularly in fraud prevention and BSA/AML compliance. Examiners are increasingly asking not just whether you’re using AI responsibly, but whether you’re addressing AI-powered fraud threats at all.

The regulatory risk is shifting. It’s no longer just about the risk of adopting AI. It’s about the risk of not adopting it while AI-powered fraud scales around you.

The Consortium Opportunity Community Banks Should Be Talking About

The most underappreciated development in AI fraud detection isn’t a product. It’s a collaboration model.

SWIFT’s federated learning pilot showed that institutions can train collectively on fraud patterns without sharing raw data. In that pilot, which involved 13 institutions, the shared model was twice as effective at identifying known fraud patterns as a model trained on a single institution’s data.

Now apply that principle to community banking. Imagine 200 community banks contributing anonymized fraud signals to a shared model. No individual bank has enough transaction volume to train a sophisticated AI model alone. Together, their combined data could rival that of a top-20 bank.

This isn’t theoretical. DataVisor already operates a consortium model for community and regional banks. The Bankers’ Banks and correspondent networks that already serve community banks are natural vehicles for shared fraud intelligence. The infrastructure exists. The question is whether community banking trade groups and networks will move fast enough to organize it.

The Bottom Line

Fraud isn’t a problem you can staff your way out of anymore. When deepfake calls alone expose a mid-size credit union to roughly $200,000 a month in attempted fraud, and synthetic identity document fraud grows 311% in a year, the rules-based systems that served community banks for decades are structurally overmatched.

The good news is specific and current: managed AI fraud platforms are priced for community banks, core providers are embedding AI capabilities into existing systems, and consortium models are making collective intelligence available to institutions that could never build it alone.

The 66% of community bankers who identified fraud detection as AI’s top use case aren’t wrong. They’re just not moving fast enough. The banks that deploy one AI fraud tool this quarter (not next year, not after the next board meeting) will be the ones positioned to catch fraud before it clears, as MSUFCU did with $2.57 million in deepfake exposure, not after.

The technology is ready. The pricing is accessible. The only thing left is the decision.