The Chatbot Trap: What Community Banks Get Wrong About AI Customer Service

Most community bank chatbots frustrate customers and deflect to a phone number. The banks getting it right use AI to escalate, not deflect. Here's the difference.

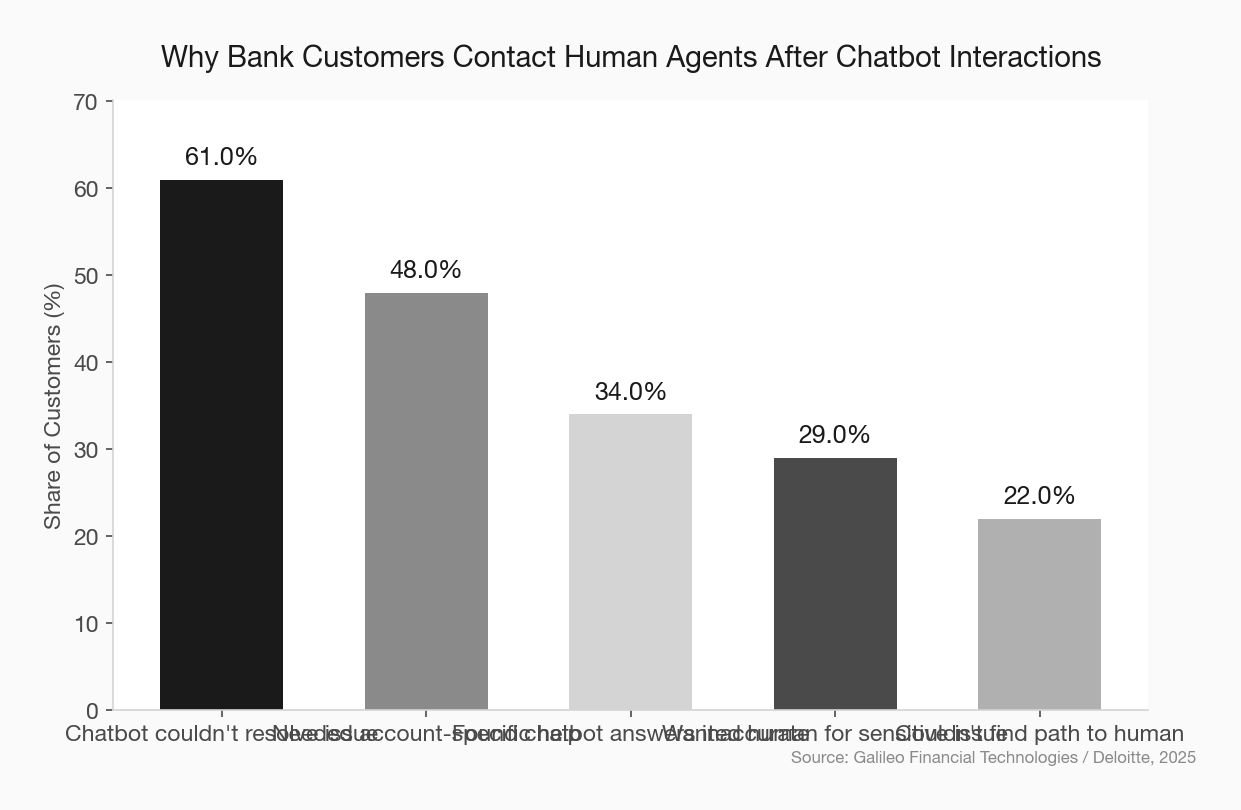

Eighty percent of consumers report feeling more frustrated after interacting with a bank chatbot than before they started. Not ambivalent — more frustrated. And 78% still needed to reach a human anyway.

That’s not an AI problem. That’s a design problem. And the community banks that understand this distinction will build something far more valuable than a cost-reduction tool.

The Bot Most Banks Built

Let’s describe the chatbot most community banks have deployed — or are considering.

A customer lands on the website with a question about why their business checking account was charged a fee. They click the chat bubble. The bot says: “Hi! I’m here to help. What can I assist you with today?”

They type their question. The bot suggests three FAQ links. None of them answer the question. They type again. The bot says: “I’m having trouble understanding your request. Would you like to speak with a representative?”

They click yes. The bot gives them a phone number and tells them business hours are Monday through Friday, 9 to 5. It’s Saturday afternoon.

The customer closes the tab. They’re angry. And the bank just lost the chance to handle a straightforward inquiry that a well-trained AI agent could have resolved in 45 seconds.

The CFPB has a name for this experience: a doom loop. In its research on chatbots in consumer finance, the bureau documented complaint after complaint from customers stuck in circular flows: answers that loop back to FAQs, inaccurate information, and no path to a human. The CFPB explicitly warned that financial institutions “risk violating legal obligations, eroding customer trust, and causing consumer harm when deploying chatbot technology” this way.

That warning landed in 2023. Most community bank chatbot experiences haven’t changed.

Why the Default Is Deflection

The chatbot most banks build is optimized for one metric: containment rate. That’s the percentage of chats the bot “resolves” without escalating to a human agent.

On its face, containment sounds good. Fewer escalations mean lower staffing costs. But containment is a false measure when the bot closes the conversation without actually helping the customer. The bank counts it as resolved. The customer counts it as abandoned.
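The following minimal Python sketch shows how containment and actual resolution diverge on the same chats. The session fields (`escalated_to_human`, `customer_confirmed_resolved`, `recontacted_within_7_days`) are hypothetical, and the seven-day recontact window is an assumption rather than an industry standard. With the made-up numbers below, 80% of chats are "contained," but only three of the eight contained conversations actually helped the customer.

```python
from dataclasses import dataclass


@dataclass
class ChatSession:
    """One chatbot conversation. Field names are hypothetical, for illustration."""
    escalated_to_human: bool           # the bot handed the chat to a person
    customer_confirmed_resolved: bool  # e.g. a post-chat "Did this solve it?" prompt
    recontacted_within_7_days: bool    # customer came back about the same issue


def containment_rate(sessions: list[ChatSession]) -> float:
    """Share of chats that never reached a human: the number vendors pitch."""
    if not sessions:
        return 0.0
    contained = sum(1 for s in sessions if not s.escalated_to_human)
    return contained / len(sessions)


def verified_resolution_rate(sessions: list[ChatSession]) -> float:
    """Share of contained chats the customer confirmed solved and did not reopen."""
    contained = [s for s in sessions if not s.escalated_to_human]
    if not contained:
        return 0.0
    resolved = sum(
        1
        for s in contained
        if s.customer_confirmed_resolved and not s.recontacted_within_7_days
    )
    return resolved / len(contained)


# Ten illustrative chats (made-up numbers, not survey data).
sessions = (
    [ChatSession(False, True, False)] * 3    # contained and genuinely solved
    + [ChatSession(False, False, True)] * 5  # "contained," but the customer gave up and called
    + [ChatSession(True, True, False)] * 2   # escalated to a human
)

print(f"Containment rate:         {containment_rate(sessions):.1%}")
print(f"Verified resolution rate: {verified_resolution_rate(sessions):.1%}")
```

A dashboard that reports only the first number would count the five abandoned chats as wins.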


This metric-driven deflection mentality comes directly from how chatbot vendors sell. The pitch is always cost savings — “automate 60% of your inquiries and reduce call volume.” That’s not wrong as an outcome, but when it becomes the primary design goal, the experience suffers.

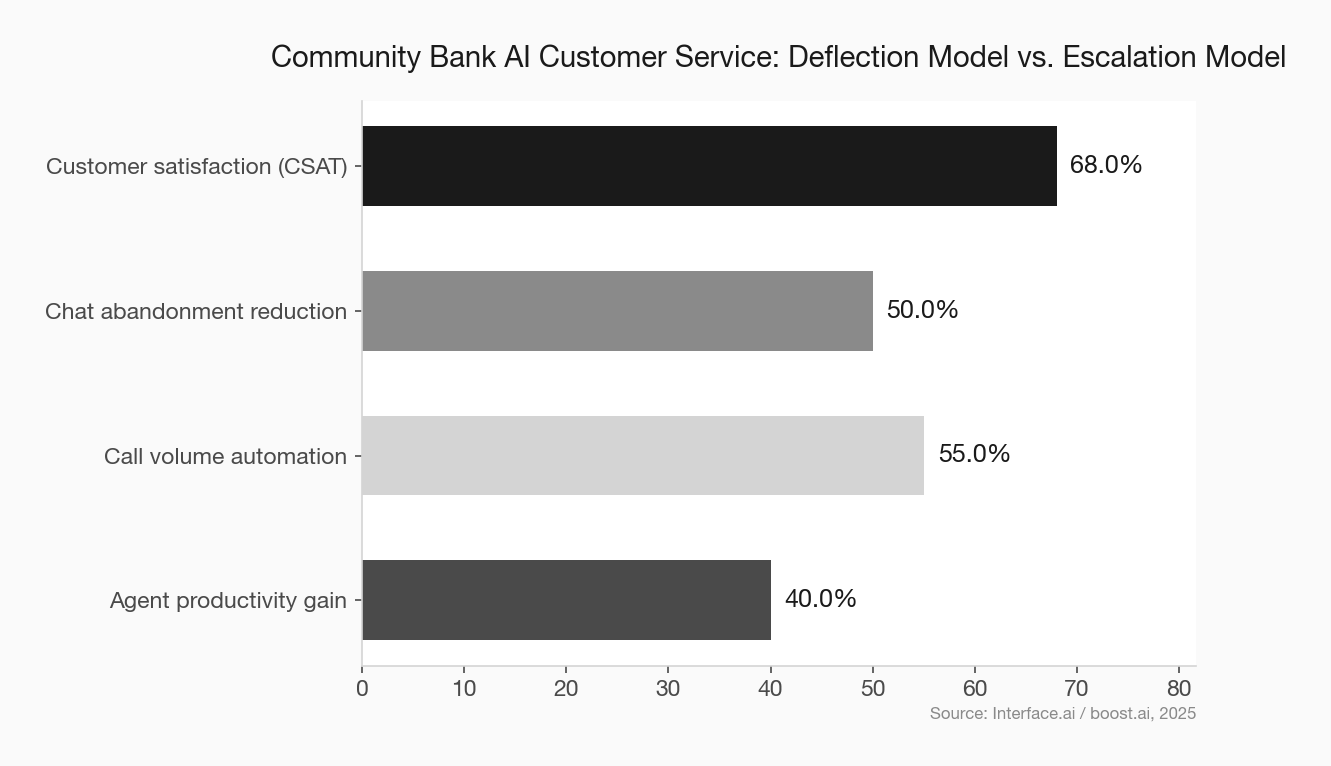

The banks getting this right aren’t building bots to keep customers out of the phone queue. They’re building bots that get customers to the right place faster, and with more context, than the phone queue ever could.

That’s a different philosophy. And it produces a different product.

The Escalation-First Design Model

Think about what a good junior banker does at the front desk. When a customer walks in with a question the banker can answer, they answer it. When they can’t — or when the situation requires judgment, sensitivity, or account access — they walk the customer to the right person and provide a warm handoff. They don’t send the customer to a FAQ board and point at the door.

That’s what a well-designed AI agent does.

The difference between a chatbot that deflects and one that escalates intelligently comes down to a few design decisions:

Intent detection depth. A basic bot pattern-matches keywords. An intelligent agent understands what the customer is actually trying to accomplish — and recognizes when the request is outside what it can reliably handle. This distinction requires a more sophisticated language model, but that technology is no longer exotic or expensive.

Sentiment and urgency signals. When a customer is upset — when their language shifts, when they repeat themselves, when they explicitly express frustration — an intelligent agent detects that and prioritizes escalation. The goal isn’t just efficiency; it’s recognizing when a human touch is required.

Context preservation at handoff. The single most frustrating part of chatbot escalation, per virtually every piece of consumer research on the topic, is being asked to repeat yourself to a human agent. A well-built system passes the full conversation context to the agent. The human picks up mid-conversation, not from scratch.

Graceful handoff to alternatives. If a live agent isn’t available, the bot shouldn’t dead-end. It should offer a callback, a secure message thread, or an appointment — and follow through.
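These four design decisions can be combined into a single routing function. The Python below is a minimal illustration under stated assumptions, not any vendor’s implementation. The intent labels, the 0.7 confidence threshold, and the phrase list are all hypothetical, and the keyword match is a crude stand-in for the sentiment model a production system would use. The point is that the handoff packet carries the full transcript, and when nobody is available the bot offers a callback, secure message, or appointment instead of a dead end.

```python
from dataclasses import dataclass, field

# Hypothetical settings; a real deployment would tune these against reviewed chats.
SENSITIVE_INTENTS = {"fraud_claim", "dispute", "account_locked", "bereavement"}
FRUSTRATION_PHRASES = ("ridiculous", "not helpful", "already told you",
                       "talk to a human", "speak to a person")
MIN_INTENT_CONFIDENCE = 0.7


@dataclass
class Turn:
    speaker: str  # "customer" or "bot"
    text: str


@dataclass
class Conversation:
    customer_id: str
    intent: str               # label from an upstream intent model (assumed)
    intent_confidence: float  # that model's confidence, 0.0 to 1.0
    turns: list[Turn] = field(default_factory=list)


def escalation_reasons(convo: Conversation) -> list[str]:
    """Collect every signal that says a human should take over."""
    reasons = []
    if convo.intent in SENSITIVE_INTENTS:
        reasons.append("sensitive_topic")
    if convo.intent_confidence < MIN_INTENT_CONFIDENCE:
        reasons.append("low_confidence")
    said = [t.text.lower().strip() for t in convo.turns if t.speaker == "customer"]
    if len(said) != len(set(said)):
        reasons.append("customer_repeated_self")
    if any(phrase in msg for msg in said for phrase in FRUSTRATION_PHRASES):
        reasons.append("frustration_language")
    return reasons


def route(convo: Conversation, live_agent_available: bool) -> dict:
    """Keep the bot on simple requests; otherwise hand off with full context."""
    reasons = escalation_reasons(convo)
    if not reasons:
        return {"action": "bot_continues"}
    handoff = {  # everything the banker needs so the customer never repeats themselves
        "customer_id": convo.customer_id,
        "intent": convo.intent,
        "reasons": reasons,
        "transcript": [(t.speaker, t.text) for t in convo.turns],
    }
    if live_agent_available:
        return {"action": "transfer_to_agent", "handoff": handoff}
    # No one online (say, Saturday afternoon): offer a next step, not a phone number.
    return {
        "action": "offer_alternatives",
        "options": ["callback", "secure_message", "appointment"],
        "handoff": handoff,
    }


# The fee question from the opening scenario, with made-up model output.
convo = Conversation(
    customer_id="cust-001",
    intent="fee_inquiry",
    intent_confidence=0.55,
    turns=[
        Turn("customer", "Why was my business checking account charged a fee?"),
        Turn("bot", "Here are some articles that might help."),
        Turn("customer", "Why was my business checking account charged a fee?"),
    ],
)
result = route(convo, live_agent_available=False)
print(result["action"], result["handoff"]["reasons"])
```

In this example the Saturday fee question routes to `offer_alternatives` with the reasons `low_confidence` and `customer_repeated_self`. The banker who returns the call receives the whole transcript.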

What the Community Bank Advantage Actually Is

Here’s where this gets interesting for community banks specifically.

The mega-banks — Bank of America, Chase, Wells Fargo — have invested hundreds of millions in AI customer service infrastructure. Bank of America’s Erica has logged over 3 billion interactions. These are genuinely capable systems built at enterprise scale.

Community banks can’t replicate that. But they don’t need to.

The community bank brand promise is human relationships. That promise only becomes credible if the digital experience actually honors it. A chatbot that feels impersonal, dismissive, or circular actively undermines the brand. It says: we don’t really care, we just want you to go away.

When a community bank’s AI agent quickly recognizes it can’t help with something, passes context to a named local banker, and that banker calls back within the hour — that’s not just a good chatbot. That’s a brand moment. It’s a demonstration of the relationship that a neobank literally cannot replicate.

The bar for community banks isn’t “build what Bank of America has.” The bar is “use AI to make your humans faster and more context-aware.” That’s an achievable goal with today’s technology.


The Vendors Worth Knowing

A small number of AI platforms are built specifically for community banks and credit unions. That matters because a generic enterprise chatbot builder won’t understand your core system integrations, your compliance requirements, or your community banking context.

Interface.ai is the most prominent example. Its BankGPT platform, launched in late 2025, is deployed across close to 100 community financial institutions and handles roughly 1.5 million conversations daily. Credit unions using Interface.ai’s Voice AI have reported 40–60% automation of incoming calls while cutting call wait times by up to 80%. Critically, its escalation model is designed around context preservation: agents get the full conversation before picking up.

Eltropy offers a unified conversation platform that handles chat, SMS, and voice with a similar escalation-first philosophy. Several credit unions have used it specifically to address the doom loop problem — building in explicit rules about when AI defers to humans rather than keeping customers in automated flows.

The common thread: these platforms are built for institutions that compete on relationships. Their default isn’t containment. It’s resolution.

The Implementation Mistake to Avoid

Most community banks that deploy a chatbot do it in one of two ways: they let the core vendor bundle something in, or they pick a generic tool based on price.

Neither approach results in an escalation-first design. Core vendor chatbots are typically built around FAQ databases. Generic tools require significant customization to integrate with core systems — and without that integration, the bot can’t pull account data, which means it can’t do anything useful.

Before signing any AI customer service contract, ask three questions:

- What does the escalation path look like — what context is passed to the human agent?

- What triggers escalation — is it just explicit customer requests, or does it detect sentiment and urgency signals?

- How does the bot perform when it doesn’t know the answer — does it acknowledge uncertainty or confabulate a response?

If a vendor can’t answer question three clearly, walk away. A bot that makes things up is worse than no bot at all, particularly in a regulated industry where inaccurate financial information creates real liability.
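Question three is partly a design question. One common pattern is a grounding gate: the bot answers only when it has matched the question to an approved source, such as a fee schedule or disclosure, with enough confidence. Otherwise it says it doesn’t know and escalates. The Python sketch below illustrates that pattern under stated assumptions and does not describe how any named vendor works. The `Retrieved` type, its `score` field, and the 0.8 threshold are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

ANSWER_THRESHOLD = 0.8  # hypothetical; set by reviewing real transcripts


@dataclass
class Retrieved:
    answer: str     # text drawn from an approved source, not free generation
    source_id: str  # e.g. which fee schedule or disclosure it came from
    score: float    # match confidence from the retrieval step, 0.0 to 1.0


def respond(hit: Optional[Retrieved]) -> dict:
    """Answer from an approved source, or admit uncertainty and escalate."""
    if hit is None or hit.score < ANSWER_THRESHOLD:
        return {
            "text": ("I'm not certain about that, and I don't want to guess "
                     "about your account. I can connect you with a banker."),
            "source": None,
            "escalate": True,
        }
    return {"text": hit.answer, "source": hit.source_id, "escalate": False}


# Illustrative inputs; the answer text and source IDs are made up.
print(respond(Retrieved("See the monthly maintenance fee section.", "fee-schedule", 0.93)))
print(respond(Retrieved("Possibly an overdraft fee?", "faq-misc", 0.41)))
print(respond(None))
```

Every answer the bot gives can be traced to a source ID. A low-confidence match gets an honest “I’m not sure” rather than a plausible guess.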


The Regulatory Dimension

The CFPB’s interest in chatbot doom loops isn’t idle. The Biden administration’s “Time Is Money” initiative explicitly called out financial institution chatbots that trap consumers in automated systems as a target for rulemaking. That effort stalled, but the underlying consumer frustration hasn’t.

Regardless of where regulation lands, community banks have a practical reason to get this right: examiners reviewing your customer service practices can look at chatbot interaction logs. A pattern of customers being unable to reach human assistance for disputes, fraud claims, or sensitive account issues is an examination finding waiting to happen.
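A bank can look for that pattern in its own logs before an examiner does. The minimal Python sketch below assumes chat logs can be exported with an intent label and a flag showing whether the customer ever reached a person. Both field names and the intent set are hypothetical. The script counts sensitive-topic conversations that ended without human contact.

```python
from collections import Counter

# Hypothetical log schema: each session is a dict with "intent" and "reached_human".
SENSITIVE_INTENTS = {"dispute", "fraud_claim", "account_access"}


def unreached_sensitive_counts(sessions: list[dict]) -> Counter:
    """Count sensitive-topic sessions, by intent, that never reached a human."""
    return Counter(
        s["intent"]
        for s in sessions
        if s["intent"] in SENSITIVE_INTENTS and not s["reached_human"]
    )


# Illustrative export (made-up records).
logs = [
    {"intent": "fraud_claim", "reached_human": False},
    {"intent": "fraud_claim", "reached_human": True},
    {"intent": "dispute", "reached_human": False},
    {"intent": "balance_inquiry", "reached_human": False},
]
print(unreached_sensitive_counts(logs))
```

Any nonzero count for fraud claims or disputes deserves a transcript review.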


The institutions that deploy AI as a deflection shield are creating risk. The ones deploying it as an escalation engine are building defensible, customer-first service infrastructure.

The Bottom Line

The chatbot trap isn’t about the technology being bad. The technology is genuinely good. The trap is in deploying it with the wrong goal.

A chatbot built to contain customers is a customer service tax. A chatbot built to escalate intelligently is a relationship accelerator.

Community banks have been saying for years that their advantage is relationships. The chatbot is where that claim gets tested in real time, at scale, at 11pm on a Saturday.

Design it to pass the test.